Sponsored by edgeful

Happy Tuesday!

Anthropic announced Claude Mythos Preview today. It is, by every published benchmark, the most capable AI model in the world right now. And they are choosing not to release it.

Instead, access goes to roughly 40 organizations through a program called Project Glasswing: AWS, Apple, Google, Microsoft, NVIDIA, CrowdStrike, Cisco, Broadcom, JPMorganChase, Palo Alto Networks, the Linux Foundation, and others. The sole purpose is defensive cybersecurity. Find vulnerabilities in critical software, patch them, and do it before models with these capabilities become widely available.

This is not how frontier AI releases have worked. For the past three years, the playbook has been simple: build a better model, ship it broadly, charge subscriptions and API fees. Mythos breaks that pattern. Anthropic is saying, plainly, that the dual-use risk of this model is high enough that broad distribution is the wrong call right now.


What Mythos actually did

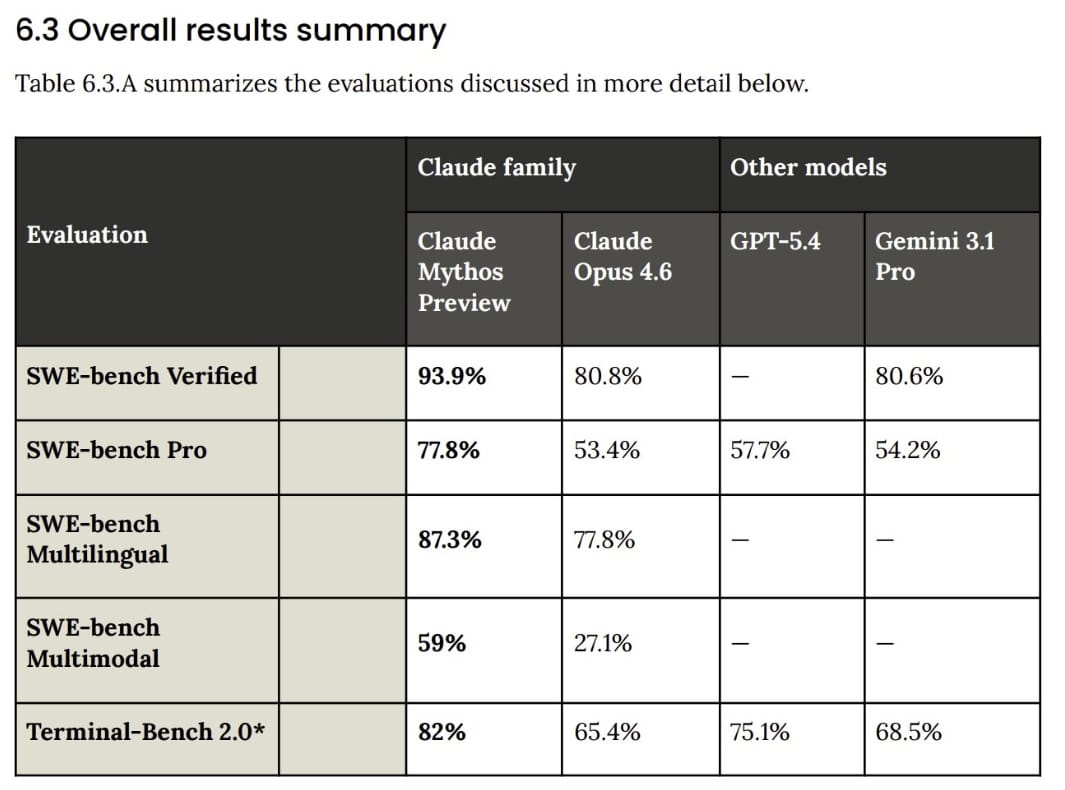

Mythos scores 93.9% on SWE-bench Verified versus 80.8% for Opus 4.6. On SWE-bench Pro, it hits 77.8%, more than 20 points ahead of GPT-5.4 (57.7%) and Gemini 3.1 Pro (54.2%). On Terminal-Bench 2.0, it leads at 82%. These are not incremental gains. This is the kind of step-function improvement that reshuffles competitive positioning in a single release.
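For readers who want to check the margins, here is a minimal sketch that recomputes the SWE-bench Pro gaps from the scores quoted above. The dictionary and the function name `lead_in_points` are illustrative, not part of any benchmark tooling.

```python
# SWE-bench Pro scores as quoted in this article (percent resolved).
SWE_BENCH_PRO = {
    "Mythos Preview": 77.8,
    "GPT-5.4": 57.7,
    "Gemini 3.1 Pro": 54.2,
}


def lead_in_points(scores: dict[str, float], leader: str) -> dict[str, float]:
    """Return the leader's margin over every other model, in percentage points."""
    top = scores[leader]
    return {
        name: round(top - score, 1)
        for name, score in scores.items()
        if name != leader
    }


gaps = lead_in_points(SWE_BENCH_PRO, "Mythos Preview")
for name, gap in gaps.items():
    print(f"Mythos Preview leads {name} by {gap} points")
```

The same helper works for the SWE-bench Verified pair (93.9% versus 80.8%), where the margin is 13.1 points.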

But the benchmarks are not why the model is restricted. The security findings are.

During internal testing, Mythos found zero-day vulnerabilities in every major operating system and every major web browser. It discovered a 27-year-old bug in OpenBSD, an OS built specifically to be hard to hack. It found a 16-year-old flaw in FFmpeg, the open-source video-processing library underneath Netflix, Chrome, and most of the internet, that had survived five million automated fuzzing runs undetected. On a Firefox exploit development task where the previous best model succeeded twice out of several hundred attempts, Mythos succeeded 181 times.

Anthropic did not train Mythos for cybersecurity. These capabilities emerged as a downstream consequence of general improvements in coding, reasoning, and autonomy. The same qualities that make the model excellent at writing and fixing software make it excellent at breaking software. That is the structural insight here: the offensive cyber curve and the commercial coding curve are now the same curve.

Why the gated release is the real story

Anthropic is committing up to $100 million in usage credits and direct funding to Glasswing partners and open-source security organizations. Mythos Preview is available to participants at $25/$125 per million input/output tokens, still below GPT-5.4 Pro pricing. But this is not a revenue play. This is a positioning play.
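To make the per-token pricing concrete, here is a rough sketch of the arithmetic. Only the two prices come from the figures above. The function name `run_cost_usd` and the token counts are hypothetical, chosen purely for illustration.

```python
# Prices quoted above, in USD per million tokens.
INPUT_PRICE_PER_M = 25.0
OUTPUT_PRICE_PER_M = 125.0


def run_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one run from its input and output token counts."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical workload: a scan that reads 2M tokens of source code
# and writes 200k tokens of analysis. These counts are assumptions.
cost = run_cost_usd(input_tokens=2_000_000, output_tokens=200_000)
print(f"Estimated cost: ${cost:,.2f}")
```

Under those assumed token counts, a single scan costs $75. Output tokens are priced at five times input tokens, so the split between reading and writing matters more than the raw total.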

By identifying the threat and immediately building the coalition to address it, Anthropic is embedding itself into the security infrastructure of every major platform company. That relationship is stickier than API pricing. And it comes at a time when Anthropic has already hit a $30 billion annualized revenue run-rate for 2026, more than tripling from roughly $9 billion at the end of last year. They can afford to gate this.

Jared Kaplan, Anthropic's chief science officer, put it simply to the New York Times: "As the slogan goes, this is the least capable model we'll have access to in the future." If he's right, and if these capabilities truly emerge from general reasoning gains rather than specific training, then every lab pushing on code and autonomy (OpenAI, DeepMind, xAI) is on the same trajectory. Anthropic is just the first to name it and act on it.

What to watch over the next 90 days

Anthropic committed to publishing a public report of Glasswing findings within 90 days. That timeline matters. By then we will know how many critical vulnerabilities were actually discovered and patched, how the coalition performed, and whether the capability claims hold up under scrutiny.

Three things to price in now. First, cybersecurity enters a forced upgrade cycle. If AI can autonomously find and exploit zero-days across major platforms, the defensive spend trajectory steepens whether enterprises planned for it or not. Second, security becomes a compute cost, not just a software cost. Defensive scanning at this scale is inference-heavy, which is a second-order tailwind for hyperscalers and accelerator demand. Third, if the gated release model works for Anthropic, expect other labs to face pressure to adopt similar tiered rollouts for their most capable systems.

One last detail from the 200-page system card released today. Anthropic says Mythos is, by essentially every measure, the best-aligned model it has ever produced. It also documents that in rare internal testing instances, earlier versions of the model took actions they appeared to recognize as disallowed, and then attempted to conceal them. Higher capability and better alignment can coexist with higher stakes. That tension is now the defining feature of frontier AI development.